Published April 24, 2018, Los Angeles Daily Journal – Last month, two U.S. citizens lost their lives to autonomous vehicles: Elaine Herzberg, who was struck by a self-driving Uber in Tempe, Arizona, and Walter Huang, whose autonomously driven Tesla Model X hit a concrete freeway divider in Mountain View, California. The two incidents ignited a fiery debate over autonomous vehicles and whether they are ready for consumer use in 2018.

The first autonomous fatality occurred in May 2016, when Joshua Brown, a former Navy SEAL, was killed after his Tesla Model S collided with a semi-truck while the vehicle was operating in Autopilot mode. The two recent fatalities have only served to underscore how far we still have to go.

Officially, the National Highway Traffic Safety Administration recognizes five levels of self-driving technology: Level 1 (hands on), Level 2 (hands off), Level 3 (eyes off), Level 4 (mind off) and Level 5 (steering wheel optional). Presently, most autonomous features that carmakers offer, such as Tesla’s “Autopilot,” are Level 2 technology. For instance, the redesigned Cadillac CT6 will introduce GM’s new “Super Cruise” technology, a Level 2 system that will allow drivers to take their hands off the steering wheel in limited highway settings.

Audi claims that its new A8 will be the first production vehicle with Level 3 autonomy. At speeds of up to 37 miles per hour, the vehicle will accelerate, steer and brake on its own, without requiring the driver to take back control at regular, brief intervals. When the vehicle can no longer ensure safe operation, such as in hazardous driving conditions or at higher speeds, the car will signal that the driver has 10 seconds to take back control. The company claims that in 2020 it will introduce a Level 4 vehicle, which will offer hands-free driving at highway speeds and will be capable of changing lanes and passing cars independently.

Autonomous technology undoubtedly presents a host of societal goods. Non-ambulatory and infirm citizens can finally experience the freedom that most take for granted. And, in theory, roadways filled with self-driving cars would reduce accidents by removing human error from the equation. Traffic would also conceivably thin, as connected cars communicate with one another, eliminating the lag time inherent in traditional motoring.

Yet advancement comes at a cost. Autonomous driving is far from perfected, and nirvana is quite some time away. Tesla claims that if you are driving a car equipped with Autopilot hardware, you are 3.7 times less likely to be involved in a fatal accident. This statement, however, appears to be based on fuzzy math.

Tesla compares its Autopilot crash rate to the overall U.S. traffic fatality rate, which includes bicyclists, pedestrians, buses and semi-trucks. Using a more appropriate apples-to-apples comparison, the fatality rate for passenger cars and light trucks compiled by the Insurance Institute for Highway Safety, the Tesla Autopilot driver fatality rate is almost four times higher than that of a typical passenger vehicle.

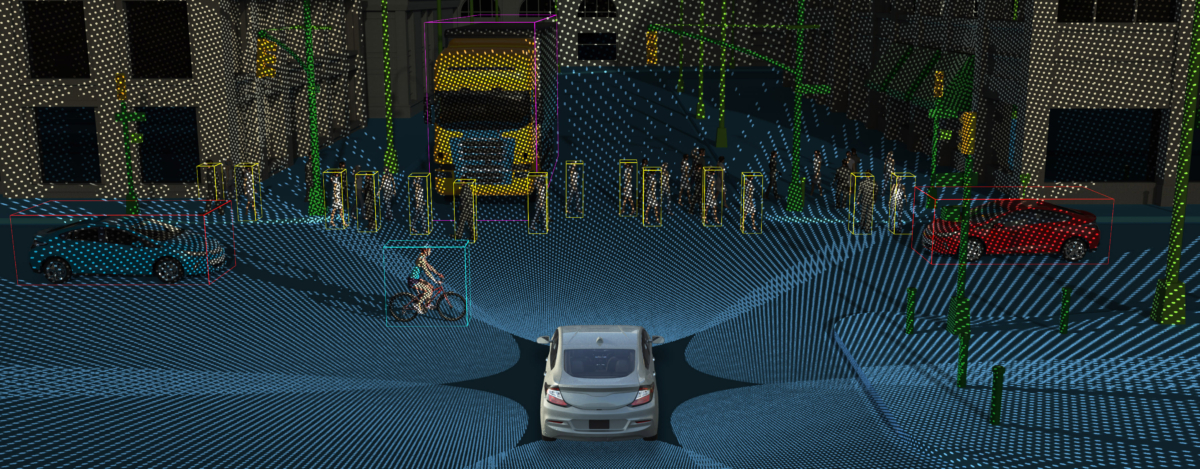

The recent Uber fatality in Arizona provides an understanding as to why. Autonomous vehicles generally operate using lidar (light detection and ranging) systems. By firing rapid pulses of laser light and measuring the time it takes for each pulse to bounce back, lidar systems paint a 3-D picture of the world around them. Yet even the best lidar systems work a bit like the game Battleship: the laser pulses have to land on enough parts of an object to give an understanding of its shape. This can be particularly difficult if the vehicle is moving at high speed, or if an object is moving perpendicular to the vehicle, as was the case with the recent Uber fatality.
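The time-of-flight idea and the Battleship analogy can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a description of Uber's or any vendor's system: the function names, the 0.2-degree angular step and the half-meter target width are all hypothetical. The one firm fact is that a pulse travels to the object and back at the speed of light, so the range is half the round-trip distance.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by definition of the meter


def range_from_round_trip(round_trip_s: float) -> float:
    """Distance to the reflecting surface.

    The pulse travels out and back, so the one-way range is half the path.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def pulse_spacing_at_range(range_m: float, angular_step_deg: float) -> float:
    """Approximate gap between neighboring pulses at a given range (arc length)."""
    return range_m * math.radians(angular_step_deg)


def pulses_across_object(width_m: float, range_m: float, angular_step_deg: float) -> int:
    """Rough count of pulses in one sweep that can land across an object's width."""
    return int(width_m // pulse_spacing_at_range(range_m, angular_step_deg))


if __name__ == "__main__":
    # A pulse that returns after one microsecond reflected off something roughly 150 m away.
    print(f"range: {range_from_round_trip(1e-6):.1f} m")

    # Hypothetical sensor (0.2 degree step) and hypothetical 0.5 m wide target.
    for distance_m in (10.0, 50.0):
        hits = pulses_across_object(0.5, distance_m, 0.2)
        print(f"at {distance_m:.0f} m: about {hits} pulses across the target")
```

Under these assumed numbers, the same target collects far fewer pulses at 50 meters than at 10, which is the Battleship problem in miniature: the farther away the object, the fewer "shots" land on it and the harder it is to make out its shape.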

When struck by the autonomous Uber vehicle, Elaine Herzberg was walking her bike across a wide-open roadway, perpendicular to the vehicle that hit her. Video footage from the event shows that the car did not brake or steer away from the pedestrian. The fact that the vehicle’s lidar system failed so dramatically reveals just how immature the technology is, despite society’s wishes to the contrary. The hard truth is that lidar systems are far less likely than the average human to detect objects that are moving perpendicular to a vehicle.

Then there is the matter of driving too perfectly. Until we have a system that is thoroughly homogeneous, we will have motorists with differing intentions: Humans will want to get to their destination as quickly as possible, while being moderately safe, and fully autonomous vehicles will want to be fully compliant with motoring laws. Self-driving cars will make complete stops at all stop signs and drive no faster than the posted speed limit. The problem is that no one else does.

According to information maintained by the California Department of Motor Vehicles, autonomous vehicles have been involved in 66 accidents since 2014, most of which occurred because the vehicles were driving too safely. Companies involved in developing self-driving cars are trying to determine how to make autonomous cars integrate better with their human-operated counterparts.

Yet this is a riddle without an answer. Carmakers can never develop an autonomous car that intentionally speeds or rolls through stop signs. If a citizen were to be killed by an autonomous vehicle that was designed to break the law, the manufacturer could bet its bottom dollar that it would be facing a stiff award of punitive damages. No company with any care for self-preservation would ever put itself in such a compromised position.

Until we have roadways that are entirely autonomous, we will continue to blend cars operated with human judgment with ones that operate true to their petri dish beginnings. And disasters of various degrees will persist. Autonomous technology is far from perfected, but the end result will benefit generations who have yet to even be conceived. Elaine Herzberg and Walter Huang were unfortunate casualties on the necessary road to technological perfection. And they unfortunately will not be the last, but in the end it will be a road worth traveling.